I'm working on this at the moment, so ask away. My MS means that in 6 months much of it will be forgotten, so ask while it's still fresh.

The dataset is what matters, so to give you an exact reference it's important that you also use the same one:

https://drive.google.com/file/d/1dreV5fBIwzdcJnXEueYwc4NeWCyufdS-/view?usp=share_link

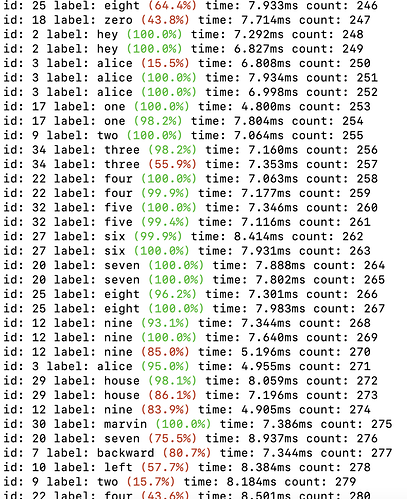

Judging from training, I think I'm actually a tad short of data, by maybe another 50-70%, as training accuracy is quite a bit above validation accuracy. Not too bad, but it is showing slight signs of overfitting.

When training, you have to take the metrics with a grain of salt, as we don't know how narrow the classification is.

An analogy: sitting next to a swimming pool, you can hit the pool with a tennis ball 100% of the time, but is that as accurate as hitting a dartboard bullseye from the same distance?

In a standard binary classification, the choice of data can give very little cross entropy between two huge pools, so yes, 100% training accuracy is hard not to get, while in real use as a KWS it will still be poor.

There is nothing clever about a classification model: it classifies based on the classes you give it.

Hence I use far more classes: `wanted_words='heymarvin,noise,unk,h1m1,h2m1,h1m2,h2m2'`. Index[0] `heymarvin` is the KW. Index[1] `noise` (or call it voice_silence) is a class of no voice. Index[2] `unk` is unknown voice, containing words with similar syllables that are phonetically different to the KW. The remaining classes, e.g. `h1m2` (Hey1 Marvin2), are phonetically similar Hey & Marvin combinations that don't contain "Hey Marvin". They deliberately create cross entropy with the KW to make that swimming pool small, while being just unknown subsets not contained in `unk`.
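As far as I know, with `--split_data 0` (as in the training command further down) kws_streaming uses the `wanted_words` list as-is for the label order, which is why the streaming script reads the keyword probability from index 0 and the noise probability from index 1. A minimal sketch of that mapping, assuming a 7-value output vector in `wanted_words` order; `class_probs` and the logit values are hypothetical, made up purely for illustration:

```python
import numpy as np

# Class order as given to --wanted_words; assumed to be the model's output order.
WANTED_WORDS = 'heymarvin,noise,unk,h1m1,h2m1,h1m2,h2m2'.split(',')
LABEL_INDEX = {name: i for i, name in enumerate(WANTED_WORDS)}


def softmax_stable(x):
    """Softmax with the max subtracted first so exp() cannot overflow."""
    e = np.exp(x - np.max(x))
    return e / e.sum()


def class_probs(logits):
    """Turn one frame of raw model outputs into {label: probability}."""
    probs = softmax_stable(np.asarray(logits, dtype=np.float64))
    return {name: float(probs[i]) for name, i in LABEL_INDEX.items()}


# Hypothetical logits for one 20 ms frame; the values are made up for illustration.
probs = class_probs([6.0, 1.0, 0.5, 2.0, 1.5, 2.0, 1.0])
print(LABEL_INDEX['heymarvin'], LABEL_INDEX['noise'])  # 0 1
print(max(probs, key=probs.get))  # heymarvin
```

The hard-negative classes (`h1m1` to `h2m2`) still take their share of the softmax, so a "Hey Marvin"-like phrase that isn't the keyword can pull probability away from index 0 instead of landing in it.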

Most problems are due to volume level, for two reasons. Mics are often not very sensitive, and AGC is not set automatically on some. Also, when we speak we often don't realise how much we stress the opening phone relative to the rest of a sentence; it can be several times larger in amplitude. The 'H' of Hey can be 4x or more the amplitude of the rest of the word.

A `print(lvl)` in the above code should show this, and you can watch it and add debug output as you go.
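To see that onset stress outside the live callback, a recording can be split into the same 20 ms (320-sample) frames and the per-frame peak printed in dBFS. A minimal sketch, assuming mono 16 kHz float audio; `frame_peaks_db` is a hypothetical helper, and the synthetic tone below (loud 60 ms onset, then a tail at a quarter of the amplitude) only stands in for a real keyword recording:

```python
import numpy as np


def frame_peaks_db(audio, frame_len=320, floor_db=-120.0):
    """Peak level of each non-overlapping frame, in dB relative to full scale (1.0)."""
    audio = np.asarray(audio, dtype=np.float32).reshape(-1)  # assumes mono input
    n_frames = len(audio) // frame_len                        # drop a trailing partial frame
    frames = audio[:n_frames * frame_len].reshape(n_frames, frame_len)
    peaks = np.max(np.abs(frames), axis=1)
    # Clamp silent frames so log10(0) doesn't produce -inf.
    floor = 10.0 ** (floor_db / 20.0)
    return 20.0 * np.log10(np.maximum(peaks, floor))


# Synthetic stand-in: 0.8 amplitude for the first 60 ms, then 0.2 (a 4x drop).
sr = 16000
t = np.arange(int(0.4 * sr)) / sr
tone = np.sin(2 * np.pi * 440 * t).astype(np.float32)
envelope = np.where(t < 0.06, 0.8, 0.2).astype(np.float32)
peaks = frame_peaks_db(tone * envelope)
for i, db in enumerate(peaks):
    print(f'frame {i:2d}: {db:6.1f} dBFS')
```

With a real file, `data, sr = sf.read('kw.wav', dtype='float32')` followed by `frame_peaks_db(data)` does the same; a 4x amplitude ratio shows up as roughly 12 dB between the onset frames and the rest.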

Also, on a KW hit, if you use soundfile, `sf.write('kw.wav', rec_rbuff, 16000)` lets you look at the audio as a WAV file afterwards.

Python is great for RAD, research or a hobby, but hopefully someone will create TFLite & ONNX runners in Rust or C/C++, as this DSP is often repeated and the focus platform is embedded. The code here is purely a mix of hobby and research.

@shellcode

You could test against a WAV dataset with an adaptation of this code, as the input doesn't have to come from a mic:

```python
import tensorflow as tf
import soundfile as sf
import numpy as np
import glob
import os
from playsound import playsound
import sys, tty, termios


def getkey():
    """Read a single keypress (Unix terminals only) and map common keys to names."""
    old_settings = termios.tcgetattr(sys.stdin)
    tty.setcbreak(sys.stdin.fileno())
    try:
        while True:
            b = os.read(sys.stdin.fileno(), 3).decode()
            # Arrow keys arrive as 3-byte escape sequences; keep the last byte.
            if len(b) == 3:
                k = ord(b[2])
            else:
                k = ord(b)
            key_mapping = {
                127: 'backspace',
                10: 'return',
                32: 'space',
                9: 'tab',
                27: 'esc',
                65: 'up',
                66: 'down',
                67: 'right',
                68: 'left'
            }
            return key_mapping.get(k, chr(k))
    finally:
        # Always restore the terminal settings.
        termios.tcsetattr(sys.stdin, termios.TCSADRAIN, old_settings)


def kw_detect(rec, sample_rate, duration):
    """Run one streaming inference step on a 20 ms frame and carry the model state forward."""
    rec = np.reshape(rec, (1, int(sample_rate * duration)))
    #rec = np.multiply(rec, 8)
    # Make prediction from model
    interpreter1.set_tensor(input_details1[0]['index'], rec)
    # set input states (index 1...)
    for s in range(1, len(input_details1)):
        interpreter1.set_tensor(input_details1[s]['index'], inputs1[s])
    interpreter1.invoke()
    output_data = interpreter1.get_tensor(output_details1[0]['index'])
    # get output states and set it back to input states
    # which will be fed in the next inference cycle
    for s in range(1, len(input_details1)):
        # The function `get_tensor()` returns a copy of the tensor data.
        # Use `tensor()` in order to get a pointer to the tensor.
        inputs1[s] = interpreter1.get_tensor(output_details1[s]['index'])
    # Raw (non-softmaxed) model output for class index 1
    return output_data[0][1]


# Parameters
duration = 0.020  # 20 ms per inference step
sample_rate = 16000
num_channels = 1
kw_path = "../ProjectEars/dataset/trim-combine/h1"

# Load the TFLite model and allocate tensors.
interpreter1 = tf.lite.Interpreter(model_path="../GoogleKWS/models2/crnn-quant/tflite_non_stream/stream_state_external.tflite")
interpreter1.allocate_tensors()

# Get input and output tensors.
input_details1 = interpreter1.get_input_details()
output_details1 = interpreter1.get_output_details()

# Zeroed buffers: input 0 is the audio frame, the rest are streaming states.
inputs1 = []
for s in range(len(input_details1)):
    inputs1.append(np.zeros(input_details1[s]['shape'], dtype=np.float32))

start = 0
count = 0
kw_files = glob.glob(os.path.join(kw_path, '*.wav'))
if len(kw_files) == 0:
    print('No files found')

for kw_wav in kw_files:
    key_quit = False
    frame = 0
    found_start = False
    found_end = False
    data, samplerate = sf.read(kw_wav, dtype='float32')
    print(kw_wav, data.shape)
    # Step through the file 320 samples (20 ms) at a time, up to 100 frames (2 s).
    while frame < 100:
        start = 320 * frame
        rec = data[start:start + 320]
        #print(rec.shape, start)
        if len(rec) < 320:
            break
        kw_prob = kw_detect(rec, sample_rate, duration)
        if kw_prob > 0.01:  #and found_start == False:
            print(kw_wav, kw_prob, frame)
            playsound(kw_wav)
            try:
                # Any key moves on to the next file ('d' would delete it if os.remove is uncommented).
                while True:
                    k = getkey()
                    if k == 'd':
                        #os.remove(kw_wav)
                        key_quit = True
                        break
                    else:
                        print(k)
                        key_quit = True
                        break
            except (KeyboardInterrupt, SystemExit):
                os.system('stty sane')
                print('stopping.')
        if key_quit == True:
            break
        frame += 1
    # Feed 100 frames (2 s) of silence through the model so its state settles before the next file.
    for s in range(100):
        rec = np.zeros(320, dtype=np.float32)
        kw_prob = kw_detect(rec, sample_rate, duration)
    count += 1
    print(count)
```

And I noticed I was recording backwards, so try this instead:

```python
import tensorflow as tf
import sounddevice as sd
import soundfile as sf
import numpy as np
import threading
import uuid


def softmax_stable(x):
    return np.exp(x - np.max(x)) / np.exp(x - np.max(x)).sum()


def sd_callback(rec, frames, time, status):
    global gain, max_rec, kw_hit, kw_hit_rbuff, vad_hit_rbuff, rec_rbuff, rec_samples, rec_out, kw_samples
    # Notify if errors
    if status:
        print('Error:', status)
    rec = np.reshape(rec, (1, rec_samples))
    rec = np.multiply(rec, gain)
    # Make prediction from model
    interpreter1.set_tensor(input_details1[0]['index'], rec)
    # set input states (index 1...)
    for s in range(1, len(input_details1)):
        interpreter1.set_tensor(input_details1[s]['index'], inputs1[s])
    interpreter1.invoke()
    output_data = interpreter1.get_tensor(output_details1[0]['index'])
    # get output states and set it back to input states
    # which will be fed in the next inference cycle
    for s in range(1, len(input_details1)):
        # The function `get_tensor()` returns a copy of the tensor data.
        # Use `tensor()` in order to get a pointer to the tensor.
        inputs1[s] = interpreter1.get_tensor(output_details1[s]['index'])
    lvl = np.max(np.abs(rec))
    if lvl > max_rec:
        max_rec = lvl
    # Rolling audio buffer, oldest samples first, newest frame at the end.
    rec_rbuff = np.roll(rec_rbuff, -rec_samples)
    rec_rbuff[len(rec_rbuff) - rec_samples:len(rec_rbuff)] = rec
    out_softmax = softmax_stable(output_data[0])
    # Smoothed keyword probability: mean of index 0 over the last kw_hit_samples frames.
    kw_hit_rbuff = np.roll(kw_hit_rbuff, 1)
    kw_hit_rbuff[0] = out_softmax[0]
    kw_prob = np.mean(kw_hit_rbuff)
    # A confident keyword frame clears the noise (VAD) history.
    if out_softmax[0] > 0.95:
        vad_hit_rbuff = np.multiply(vad_hit_rbuff, 0)
    vad_hit_rbuff = np.roll(vad_hit_rbuff, 1)
    vad_hit_rbuff[0] = out_softmax[1]
    vad_prob = np.mean(vad_hit_rbuff)
    # Sustained noise/no-voice re-arms the detector.
    if vad_prob > 0.95:
        print("Vad:", vad_prob, kw_prob, lvl)
        kw_hit = False
        kw_hit_rbuff = np.multiply(kw_hit_rbuff, 0)
        max_rec = 0.0
        kw_count = 0
        kw_prob = 0
        rec_out = False
    if kw_prob > 0.90:
        if kw_hit == False:
            print("Marvin:", kw_prob, lvl)
            print(kw_hit_rbuff)
            kw_hit = True
        # Save the buffered audio once per detection so you can listen to it.
        if rec_out == False:
            sf.write(uuid.uuid4().hex + '.wav', rec_rbuff[0:16000], 16000)
            rec_out = True


# Parameters
kw_duration = 1.0
kw_latency = 0.6
rec_duration = 0.020
vad_duration = 0.20
sample_rate = 16000
rec_samples = int((sample_rate * kw_duration) * rec_duration)  # 320 samples per 20 ms frame
vad_hit_samples = int((sample_rate * kw_duration) / ((sample_rate * kw_duration) * vad_duration))  # 5 frames
kw_hit_samples = int((sample_rate * kw_duration) / ((sample_rate * kw_duration) * rec_duration))  # 50 frames (1 s)
kw_samples = int(sample_rate * kw_duration)  # 16000 samples (1 s)
latency_samples = int(kw_duration * (kw_latency / rec_duration)) * rec_samples  # kw_latency expressed in samples
num_channels = 1
gain = 5  # fixed input gain for quiet mics
max_rec = 0.0
kw_hit = False
rec_out = False
kw_hit_rbuff = np.zeros(kw_hit_samples, dtype=np.float32)
vad_hit_rbuff = np.zeros(vad_hit_samples, dtype=np.float32)
rec_rbuff = np.zeros(kw_samples + latency_samples, dtype=np.float32)
sd.default.latency = ('high', 'high')
sd.default.dtype = ('float32', 'float32')

# Load the TFLite model and allocate tensors.
interpreter1 = tf.lite.Interpreter(model_path="../GoogleKWS/models2/crnn/quantize_opt_for_size_tflite_stream_state_external/stream_state_external.tflite")
interpreter1.allocate_tensors()

# Get input and output tensors, really should be static copies to use as KW resets
input_details1 = interpreter1.get_input_details()
output_details1 = interpreter1.get_output_details()
inputs1 = []
for s in range(len(input_details1)):
    inputs1.append(np.zeros(input_details1[s]['shape'], dtype=np.float32))

# Start streaming from microphone
with sd.InputStream(channels=num_channels,
                    samplerate=sample_rate,
                    blocksize=int(rec_samples),
                    callback=sd_callback):
    threading.Event().wait()
```

It's sort of working back to front, as it would act as a cancel signal if not hit, since the first-detection latency is extremely low; but until you have analysed everything you never know.
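The decision logic in `sd_callback` can be pulled out and exercised without a model or microphone: the keyword probability is averaged over the last 50 frames (1 s) and fires above 0.90, while a short run of confident `noise` frames (mean over 0.95 across 5 frames) re-arms the detector so the next utterance can fire again. A minimal pure-NumPy sketch of that logic, with thresholds and window lengths copied from the script; the `SmoothedDetector` class, `one_hot` helper and the synthetic probability stream are hypothetical:

```python
import numpy as np


class SmoothedDetector:
    """Moving-average keyword trigger with a noise-based re-arm, mirroring sd_callback."""

    def __init__(self, kw_frames=50, vad_frames=5, kw_thresh=0.90, vad_thresh=0.95,
                 kw_idx=0, noise_idx=1):
        self.kw_buf = np.zeros(kw_frames, dtype=np.float32)    # last 1 s of keyword probs
        self.vad_buf = np.zeros(vad_frames, dtype=np.float32)  # last few noise probs
        self.kw_thresh = kw_thresh
        self.vad_thresh = vad_thresh
        self.kw_idx = kw_idx
        self.noise_idx = noise_idx
        self.kw_hit = False

    def step(self, probs):
        """Feed one frame of softmax output; return True only on the frame a new hit fires."""
        self.kw_buf = np.roll(self.kw_buf, 1)
        self.kw_buf[0] = probs[self.kw_idx]
        kw_prob = float(np.mean(self.kw_buf))
        # A confident keyword frame wipes the noise history.
        if probs[self.kw_idx] > 0.95:
            self.vad_buf[:] = 0.0
        self.vad_buf = np.roll(self.vad_buf, 1)
        self.vad_buf[0] = probs[self.noise_idx]
        if float(np.mean(self.vad_buf)) > self.vad_thresh:
            # Sustained no-voice: re-arm and forget old keyword scores.
            self.kw_hit = False
            self.kw_buf[:] = 0.0
            kw_prob = 0.0
        if kw_prob > self.kw_thresh and not self.kw_hit:
            self.kw_hit = True
            return True
        return False


def one_hot(i, n=7):
    """Hypothetical 'perfectly confident' softmax output for class i."""
    p = np.zeros(n, dtype=np.float32)
    p[i] = 1.0
    return p


# Synthetic stream of 20 ms frames: 1.2 s noise, 1.1 s keyword, 1.2 s noise, 1.1 s keyword.
noise, kw = one_hot(1), one_hot(0)
stream = [noise] * 60 + [kw] * 55 + [noise] * 60 + [kw] * 55
detector = SmoothedDetector()
hits = [i for i, p in enumerate(stream) if detector.step(p)]
print(hits)  # [105, 220]
```

Each hit fires on the 46th keyword frame (46/50 = 0.92 is the first mean above 0.90), which shows the cost of smoothing over a full second: the trigger lands well after the keyword starts, in exchange for ignoring short spurious spikes.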

It does write WAVs directly, so you can actually see and hear what you are getting.

Again, much of this is down to the dataset. I forgot to mention that the dataset is also mixed with noise at, I think, -10.6 dB (my memory, lol), so because of the dataset rather than the model it should be more resilient to low levels of noise.
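The post doesn't say exactly how the -10.6 dB figure was applied, so as an assumption: one common approach is to scale the noise so its RMS sits a fixed number of dB below the speech RMS (here 10.6 dB, i.e. an SNR of about 10.6 dB) and add it. A minimal sketch of that interpretation; `mix_at_db` is a hypothetical helper, not the script that built the dataset, and the sine and random noise only stand in for real clips:

```python
import numpy as np


def rms(x):
    """Root-mean-square level, computed in float64."""
    return float(np.sqrt(np.mean(np.square(np.asarray(x, dtype=np.float64)))))


def mix_at_db(speech, noise, rel_db=-10.6, rng=None):
    """Add noise whose RMS is rel_db relative to the speech RMS (assumed meaning of '-10.6 dB')."""
    speech = np.asarray(speech, dtype=np.float32)
    noise = np.asarray(noise, dtype=np.float32)
    rng = np.random.default_rng() if rng is None else rng
    # Loop short noise files, then take a random excerpt the same length as the speech.
    if len(noise) < len(speech):
        noise = np.tile(noise, int(np.ceil(len(speech) / len(noise))))
    start = int(rng.integers(0, len(noise) - len(speech) + 1))
    noise = noise[start:start + len(speech)]
    target_rms = rms(speech) * 10.0 ** (rel_db / 20.0)
    scaled = (noise * (target_rms / max(rms(noise), 1e-12))).astype(np.float32)
    mixed = speech + scaled
    # Rescale only if the sum would clip.
    peak = float(np.max(np.abs(mixed)))
    if peak > 1.0:
        mixed = mixed / peak
    return mixed.astype(np.float32), scaled


rng = np.random.default_rng(0)
sr = 16000
t = np.arange(sr) / sr
speech = (0.3 * np.sin(2 * np.pi * 220 * t)).astype(np.float32)  # stand-in for a keyword clip
noise = rng.standard_normal(sr // 2).astype(np.float32)           # stand-in for a noise file
mixed, scaled = mix_at_db(speech, noise, rel_db=-10.6, rng=rng)
print(round(20 * np.log10(rms(scaled) / rms(speech)), 2))  # -10.6
```

If the figure was instead meant relative to full scale, the only change is to set `target_rms = 10.0 ** (rel_db / 20.0)` without the speech RMS term.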

Really, it needs a head-to-head comparison of false positives/negatives on LibriSpeech clean, and on LibriSpeech with -10.6 dB noise added (dirty).
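For that head-to-head, the usual numbers are the false reject rate on keyword clips and false accepts per hour on audio that contains no "Hey Marvin" (such as LibriSpeech). A minimal scoring sketch, assuming you already have per-clip detection counts, for example from running a detector loop over each file; `kws_error_rates` and its inputs are hypothetical, and the counts in the usage line are made up only to show the call:

```python
def kws_error_rates(kw_clip_hits, negative_hits, negative_seconds):
    """
    kw_clip_hits: detection counts, one per keyword clip (0 means the clip was missed).
    negative_hits: total detections fired on audio that contains no keyword.
    negative_seconds: total duration of that keyword-free audio, in seconds.
    Returns (false reject rate, false accepts per hour).
    """
    if not kw_clip_hits or negative_seconds <= 0:
        raise ValueError('need at least one keyword clip and some negative audio')
    misses = sum(1 for h in kw_clip_hits if h == 0)
    frr = misses / len(kw_clip_hits)
    fa_per_hour = negative_hits / (negative_seconds / 3600.0)
    return frr, fa_per_hour


# Made-up counts, only to show the call: 4 keyword clips (one missed),
# 3 false triggers over 1.5 h of keyword-free audio.
frr, fa_per_hour = kws_error_rates([1, 1, 0, 1], negative_hits=3, negative_seconds=5400)
print(frr, fa_per_hour)  # 0.25 2.0
```

Running it once on clean LibriSpeech and once on the noise-mixed copy gives the clean/dirty pair to compare between models.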

It's still a first-attempt dataset, though, so it will get some optimisations.

For google-kws I just create some files to `source` on the CLI, so if using a venv:

```bash
source venv/bin/activate
```

then source `setup.text` to get the paths:

```bash
KWS_PATH=$PWD
DATA_PATH=$KWS_PATH/data2
MODELS_PATH=$KWS_PATH/models2
CMD_TRAIN="python -m kws_streaming.train.model_train_eval"
```

Then the model type, which is what was used with the dataset linked above and the models provided:

```bash
$CMD_TRAIN \
--data_url '' \
--data_dir $DATA_PATH/ \
--train_dir $MODELS_PATH/crnn/ \
--split_data 0 \
--wanted_words 'heymarvin,noise,unk,h1m1,h2m1,h1m2,h2m2' \
--mel_upper_edge_hertz 7600 \
--how_many_training_steps 20000,20000,20000,20000 \
--learning_rate 0.001,0.0005,0.0001,0.00002 \
--window_size_ms 40.0 \
--window_stride_ms 20.0 \
--mel_num_bins 40 \
--dct_num_features 20 \
--resample 0.0 \
--background_frequency 0.0 \
--alsologtostderr \
--train 1 \
--lr_schedule 'exp' \
--use_spec_augment 1 \
--time_masks_number 2 \
--time_mask_max_size 10 \
--frequency_masks_number 2 \
--frequency_mask_max_size 5 \
--feature_type 'mfcc_op' \
--fft_magnitude_squared 1 \
crnn \
--cnn_filters '16,16' \
--cnn_kernel_size '(3,3),(5,3)' \
--cnn_act "'relu','relu'" \
--cnn_dilation_rate '(1,1),(1,1)' \
--cnn_strides '(1,1),(1,1)' \
--gru_units 256 \
--return_sequences 0 \
--dropout1 0.1 \
--units1 '128,256' \
--act1 "'linear','relu'" \
--stateful 0
```
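The streaming scripts feed 320 samples per inference (`rec_duration = 0.020`), which lines up with `--window_stride_ms 20.0` at 16 kHz, while each feature frame spans `--window_size_ms 40.0`. A quick arithmetic check of those numbers; the frame-count formula assumes no padding, which may differ from what kws_streaming does internally:

```python
SAMPLE_RATE = 16000
WINDOW_MS = 40.0   # --window_size_ms
STRIDE_MS = 20.0   # --window_stride_ms
CLIP_MS = 1000.0   # 1 s clips, matching kw_duration in the streaming script

window_samples = int(SAMPLE_RATE * WINDOW_MS / 1000)
stride_samples = int(SAMPLE_RATE * STRIDE_MS / 1000)
clip_samples = int(SAMPLE_RATE * CLIP_MS / 1000)
# Number of full analysis windows in one clip, assuming no padding.
n_frames = 1 + (clip_samples - window_samples) // stride_samples

print(window_samples, stride_samples, n_frames)  # 640 320 49
```

So each 320-sample block handed to the streaming model advances the feature extractor by exactly one stride, and the 50-frame averaging buffer in the mic script covers roughly one training clip.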