When using PiDTLN’s ns.py (PiDTLN/ns.py at main · SaneBow/PiDTLN · GitHub) , I am able to run ns according to set up instructions. I’ve heard my own voice through loopback to speakers, but no matter which audio device I choose in Rhasspy (using arecord), I don’t get any audio data.

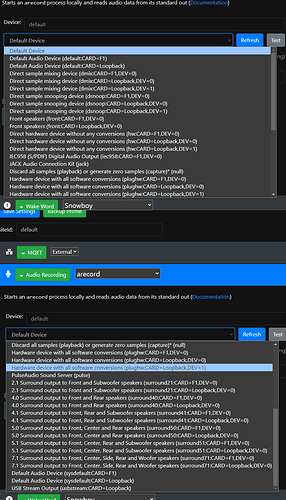

F1 is my actual input device. Here is the full list available:

I’ve read through this thread: DTLN: Realtime Machine Learning based Noise Suppression / AEC on Raspberry Pi - #7 by fastjack

But those solutions were for getting PiDTLN to run and I’m not seeing anything to try for getting a working ns.py to Rhasspy input.

For someone who has a working PiDTLN, can you share how your rhasspy is configured to use the loopback device? Were there any extra steps you had to do after getting PiDTLN running? My best guess is I’m missing some loopback setting that gets the input back to another input?

Thank you in advance, much appreciated.

If its with docker then read Problem with audio input (docker image) - #4 by rolyan_trauts

PiDTLN is actually really great and even though it creates much less artifacts than RnnNoise but will still create artifacts.

Really KWS & ASR need to be trained on a PiDTLN dataset but isn’t so sadly even though it could be implemented it has been ommitted so those artefacts could reduce recognition.

The bring your own to the party is always going to be at a dissadvantage to a dedicated software/hardware solution as you can train in the systems fingerprint and be even more accurate whilst the same fingerprint in a non specifically trained system can work the oppisite way and reduce accuracy.

Ah that makes sense. Kind of funny that accuracy could go down with cleaner audio input lol

I hate messing with those types of sound settings - feels like shooting in the dark with how many audio subsystems i’ve installed on my Pi to get something that works. I’m going to try an MQTT → wav output approach to get the audio, do whatever processing, then send back to MQTT.

TY for your guidance