I’d like to share my experience over the last two months with Rhasspy in different configurations.

My main objective is to have a simple voice system (in the living room or kitchen) to control my home automation (via Home Assistant) and a simple, custom-made shopping list.

I’ve known about Rhasspy for a long time, but I never had the chance to use it.

I started with a simple Raspberry Pi 4 with a Jabra Speak 510 to test the installation, do the first configuration and understand how it works. All good.

All my tests were in a quiet environment, so both the wake word and the intents were recognized correctly. I found that the Home Assistant intent API is no longer available, but I didn’t look for a solution, since a simple automation via the REST API was good and simple enough.
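A minimal sketch of what such a REST hand-off could look like, assuming the Rhasspy intent JSON is mapped to a Home Assistant service call through `POST /api/services/<domain>/<service>` with a long-lived access token. The intent names, entity IDs, host name and token below are placeholders for illustration, not my actual setup:

```python
import json
import urllib.request

# Hypothetical mapping: Rhasspy intent name -> (domain, service, entity_id).
ROUTES = {
    "TurnOnKitchenLight": ("light", "turn_on", "light.kitchen"),
    "TurnOffKitchenLight": ("light", "turn_off", "light.kitchen"),
}


def build_service_call(intent, base_url, token, routes=ROUTES):
    """Translate a Rhasspy intent dict into (url, headers, body) for Home Assistant."""
    name = intent["intent"]["name"]
    if name not in routes:
        raise KeyError(f"no route configured for intent {name!r}")
    domain, service, entity_id = routes[name]
    url = f"{base_url.rstrip('/')}/api/services/{domain}/{service}"
    headers = {
        "Authorization": f"Bearer {token}",
        "Content-Type": "application/json",
    }
    body = json.dumps({"entity_id": entity_id})
    return url, headers, body


def call_service(url, headers, body):
    """Send the prepared request and return the HTTP status code."""
    request = urllib.request.Request(
        url, data=body.encode("utf-8"), headers=headers, method="POST"
    )
    with urllib.request.urlopen(request, timeout=5) as response:
        return response.status
```

Keeping the request building separate from the sending makes it easy to check the mapping without a running Home Assistant instance.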

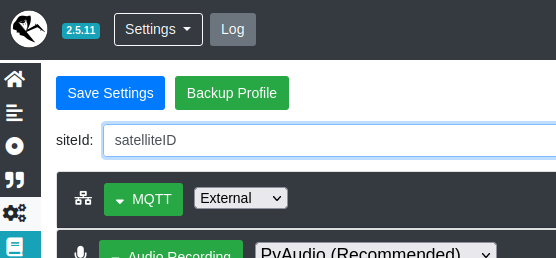

Then I moved to a base/satellite configuration. The base is always a Rhasspy instance (Docker) on my central server (NUC i7).

For the satellite I used 3 different configurations:

- Raspberry Pi 4 with both a PS3 Eye and the Jabra

- ESP32 with INMP441/MAX98357A (custom made)

- M5 Echo

For the last two setups I used the great ESP32-Rhasspy-Satellite made by Romkabouter (https://github.com/Romkabouter/ESP32-Rhasspy-Satellite/) with some small modifications of my own (I added a LED ring and a few other small things).

The Raspberry Pi 4 configuration was simple enough: I followed the official tutorial (Tutorials - Rhasspy).

I used the internal MQTT broker (in the Base instance) to avoid any interference with my main MQTT broker and have a dedicated one.

I think that a dedicated Raspberry Pi (especially these days, with the Raspberry Pi shortage) is quite oversized if it is only used as a simple MQTT microphone.

So I moved to a simpler (hardware-wise) configuration using an ESP32 with an I2S mic and speaker: the response is good enough, but my tests were always in a quiet environment. I still have to test it in a louder room (I have 2 small children so…) and understand whether the INMP441 (or maybe a dual-channel mic) is good enough as a “panoramic microphone”. Do you have any experience with this? I’m planning to build a 3D-printed enclosure: any tips for the microphone placement (top or bottom, orientation, …)?

The MAX98357A (with a small 3W speaker) was good enough for minimal sound feedback and speech (I tried some intents like “what time is it”).

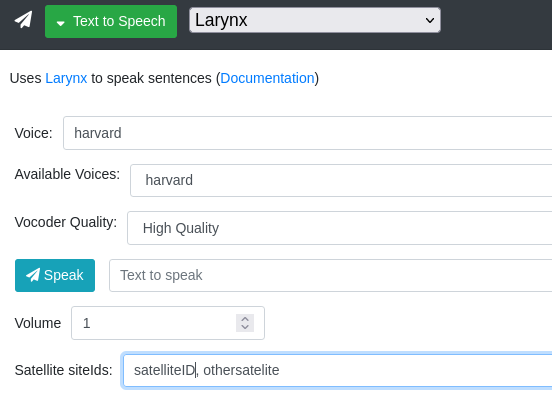

I had a problem with Hermes MQTT: if the base and the satellite have different IDs, I didn’t find a way to redirect the sound output to the satellite, since the messages are published on different topics. On this subject I found this thread (Speech from Base to Satellite), where the solution was to mirror all the base MQTT messages to the satellite topic, but this won’t work if there is more than one satellite (otherwise all the satellites will play the message!). Do you have any thoughts on this? Maybe a dialogue manager?
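To make the topic problem concrete, here is a small sketch of how the site ID shows up in the Hermes messages. It assumes that `hermes/tts/say` accepts a `siteId` field in its JSON payload and that audio playback uses topics of the form `hermes/audioServer/<siteId>/playBytes/<requestId>`. The site IDs (`base`, `kitchen`) and the helper names are made up for illustration, and this is untested against a real multi-satellite setup:

```python
import json
import uuid


def build_say_message(text, site_id, session_id=None):
    """Return (topic, payload) for a TTS request aimed at one specific site."""
    payload = {"text": text, "siteId": site_id, "id": str(uuid.uuid4())}
    if session_id is not None:
        payload["sessionId"] = session_id
    return "hermes/tts/say", json.dumps(payload)


def retarget_play_bytes(topic, target_site_id):
    """Rewrite the site ID segment of a playBytes topic, keeping the request ID."""
    parts = topic.split("/")
    is_play_bytes = (
        len(parts) == 5
        and parts[0] == "hermes"
        and parts[1] == "audioServer"
        and parts[3] == "playBytes"
    )
    if not is_play_bytes:
        raise ValueError(f"not a playBytes topic: {topic!r}")
    parts[2] = target_site_id
    return "/".join(parts)
```

Instead of mirroring every base message to all satellites, a small bridge could remember which site ID started the session and retarget the audio only to that one. Whether this is better than a proper dialogue manager configuration is exactly what I’d like feedback on.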

The last test was the M5 Echo, but I think that this device is good for educational purposes and completely useless in a real environment: the mic range is very small and the speaker is very, very weak. Maybe with a battery, as a sort of personal device… I don’t know. Anyway, it was fun to add this one to my harem of electronic toys too, lol

If any of you are interested in specific details, please let me know. I wrote this just to share my thoughts and get some feedback from you.
